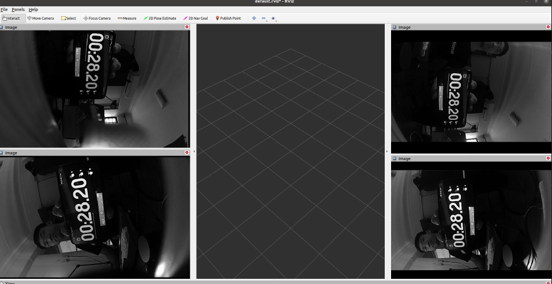

Visual Positioning:

Pushing the Limits of Localization

Satellite positioning (GNSS) is core to many of the applications we use in our personal and professional lives. Your applications rely on positioning for navigation, to calculate your distance from nearby restaurants, to check the weather, or to locate a phone with Find My Device. But GNSS isn't limited to personal use. Businesses, emergency services, and even the military use it to coordinate and track movements. Key examples include autonomous navigation, fleet management, and device location.

This technology is all around us, so it might come as a surprise that the operational scope of GNSS-critical applications is limited to open-sky environments. Satellite signals cannot reliably penetrate building structures, and those that get through suffer from attenuation and reflections, making indoor and underground positioning by GNSS unreliable.

Visual Positioning Systems (VPS) provide an effective solution for localisation both with and without satellite connectivity. VILOTA's VPS uses visual location data to extend the operational domain available to navigation, fleet management, and autonomous vehicles.

Vision-Kit-Lite

Camera-based perception can require a large team of perception and embedded engineers. Our modular sensor hardware and onboard software support robot manufacturers by speeding up their development process and lowering the barriers to adopting vision technologies.

Vision-Kit-Lite is a credit-card-sized perception and localization module, suitable for drones and small robots. It has a 360-degree field of view, permitting navigation, tracking, and monitoring. For more information on VKL7 & VKL12, VILOTA's initial market offering, please download our spec sheet.

Visual Positioning System for GPS-Denied Environments

Visual Positioning Systems work by combining information from multiple cameras and sensors to estimate the motion and position of a camera assembly, robot or vehicle.

Synchronized and calibrated stereo cameras provide visual data that allows the system to track features in the environment and calculate how far the cameras have moved. Meanwhile, the inertial sensors measure the vehicle's acceleration and rotation, which gives an independent estimate of motion and orientation.

By fusing these two sources of information, VPS can estimate the pose of a robot or vehicle in real time, even in challenging environments. VILOTA's algorithms estimate the position and orientation of our devices with minimal drift, which supports navigation and positioning, or simply the output of a coordinate.
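The idea of fusing the two estimates can be illustrated with a one-dimensional complementary filter. This is only a minimal sketch and not VILOTA's algorithm: production visual-inertial systems typically use a Kalman filter or a nonlinear optimizer over full 3D pose. The names `FusedEstimate1D`, `fuse_step`, and the blending weight `alpha` are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class FusedEstimate1D:
    position: float = 0.0  # metres
    velocity: float = 0.0  # metres per second


def fuse_step(
    state: FusedEstimate1D,
    accel: float,
    dt: float,
    visual_position: Optional[float] = None,
    alpha: float = 0.9,
) -> FusedEstimate1D:
    """Propagate with the inertial reading, then correct with vision if present."""
    # Predict: integrate acceleration. Used alone, this drifts over time.
    velocity = state.velocity + accel * dt
    position = state.position + state.velocity * dt + 0.5 * accel * dt * dt

    if visual_position is not None:
        # Correct: blend the prediction toward the camera-derived position.
        # alpha close to 1 trusts the inertial prediction more.
        position = alpha * position + (1.0 - alpha) * visual_position

    return FusedEstimate1D(position=position, velocity=velocity)
```

Calling `fuse_step` at the inertial sensor rate and passing `visual_position` only when a camera estimate is available mirrors the usual arrangement where the IMU runs faster than the cameras.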

Stereo Depth Map & Point Cloud

A depth map is a type of map used in robot navigation to represent the environment in terms of occupancy and distance. Each cell records whether it is occupied by an obstacle or is free space, together with a value giving the distance from the measuring device. Occupancy depth maps are commonly used in navigation and perception tasks such as obstacle avoidance, path planning, and localization. With the occupancy and distance information from the map, robots can make more informed decisions about how to navigate and interact with the environment.
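For a calibrated and rectified stereo pair, the distance stored in each cell is commonly derived from disparity (the horizontal pixel shift of a feature between the left and right images) using the standard relation depth = focal length × baseline / disparity. The sketch below assumes that relation; the function name and parameters are hypothetical and it does not describe how VILOTA's devices compute depth internally.

```python
from typing import List, Optional


def disparity_to_depth(
    disparity_px: List[List[float]],
    focal_length_px: float,
    baseline_m: float,
) -> List[List[Optional[float]]]:
    """Convert a grid of disparities (pixels) into a grid of depths (metres).

    Cells with zero or negative disparity have no valid stereo match and are
    returned as None.
    """
    depth = []
    for row in disparity_px:
        depth.append([
            focal_length_px * baseline_m / d if d > 0 else None
            for d in row
        ])
    return depth
```

Because depth is inversely proportional to disparity, a wider baseline or a longer focal length improves depth resolution at long range.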

A Point Cloud is a type of 3D data representation that is widely used in mapping and surveying applications. It consists of a collection of points in 3D space, each with its own set of coordinates and attributes such as colour or reflectance, generated by our visual sensing devices.
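One common way to obtain such a point cloud from a depth map is to back-project every valid pixel through the pinhole camera model. The following is a minimal sketch under that assumption; `depth_to_point_cloud` and its parameters (`fx`, `fy`, `cx`, `cy` for focal lengths and principal point in pixels) are illustrative names, and attributes such as colour are left out.

```python
from typing import List, Optional, Tuple

Point3D = Tuple[float, float, float]


def depth_to_point_cloud(
    depth_m: List[List[Optional[float]]],
    fx: float,
    fy: float,
    cx: float,
    cy: float,
) -> List[Point3D]:
    """Back-project a depth map into 3D points in the camera frame."""
    points = []
    for v, row in enumerate(depth_m):      # v: pixel row
        for u, z in enumerate(row):        # u: pixel column
            if z is None or z <= 0:
                continue                   # skip cells without a valid depth
            x = (u - cx) * z / fx
            y = (v - cy) * z / fy
            points.append((x, y, z))
    return points
```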

Stereo cameras are an alternative to LiDAR and photogrammetry and can be used to generate models of the environment. They are particularly useful for creating accurate and detailed maps of terrain, buildings, and other structures. By analysing the data from point clouds, mapping professionals can extract valuable insights and information about the environment that can inform decision-making in a variety of industries, including urban planning, construction, and environmental monitoring.

Calibration & Synchronisation

VPS relies on accurate estimation of the camera's motion by combining information from the camera images and the onboard inertial sensors. Performance depends crucially on the synchronisation and calibration of the sensors, and on robust hardware suited to the specific operational domain.

Manufacturer synchronization of stereo cameras ensures that the images captured by the left and right cameras correspond to the same point in time. If the two image streams are not synchronized with each other and with the IMU, the resulting 3D reconstruction of the scene may be inaccurate, and the estimated trajectory of the camera may be incorrect.
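On the software side, a simple consistency check is to pair each camera frame with the inertial sample closest in time and reject the pair if the gap is too large. The sketch below assumes sorted timestamps in seconds on a shared clock; the function name and the 5 ms tolerance are hypothetical, and it does not replace hardware-level synchronization.

```python
from bisect import bisect_left
from typing import Optional, Sequence


def nearest_imu_sample(
    imu_timestamps: Sequence[float],
    frame_timestamp: float,
    max_offset_s: float = 0.005,
) -> Optional[int]:
    """Return the index of the IMU sample closest to the frame time.

    Returns None if there are no samples or the closest one is further away
    than max_offset_s. imu_timestamps must be sorted in ascending order.
    """
    if not imu_timestamps:
        return None
    i = bisect_left(imu_timestamps, frame_timestamp)
    # The closest sample is either just before or just after the frame time.
    candidates = [j for j in (i - 1, i) if 0 <= j < len(imu_timestamps)]
    best = min(candidates, key=lambda j: abs(imu_timestamps[j] - frame_timestamp))
    if abs(imu_timestamps[best] - frame_timestamp) > max_offset_s:
        return None
    return best
```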

Automatic calibration ensures accurate mapping of the 2D image points onto 3D world coordinates. This includes estimating intrinsic parameters such as focal length, distortion coefficients, and principal point, as well as extrinsic parameters such as camera position and orientation with respect to the world coordinate frame.
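The role of these parameters can be seen in the pinhole camera model, which relates a 3D world point to a 2D pixel. The sketch below is illustrative only: it ignores lens distortion, assumes the extrinsics are given as a world-to-camera rotation matrix and translation vector, and uses hypothetical names throughout.

```python
from typing import Optional, Sequence, Tuple


def project_point(
    point_world: Sequence[float],
    rotation: Sequence[Sequence[float]],   # 3x3 world-to-camera rotation
    translation: Sequence[float],          # world-to-camera translation
    fx: float,
    fy: float,
    cx: float,
    cy: float,
) -> Optional[Tuple[float, float]]:
    """Project a 3D world point to pixel coordinates (no distortion)."""
    # Extrinsics: move the point into the camera frame, Xc = R * Xw + t.
    xc, yc, zc = (
        sum(rotation[r][c] * point_world[c] for c in range(3)) + translation[r]
        for r in range(3)
    )
    if zc <= 0:
        return None  # point is behind the camera
    # Intrinsics: perspective division, then scale by the focal length and
    # shift by the principal point.
    return (fx * xc / zc + cx, fy * yc / zc + cy)
```

Calibration amounts to finding the parameter values for which projections like this one agree with where known points actually appear in the image.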

Luxonis Products

BESPOKE SOLUTIONS FOR CLIENTS

Visual Positioning Systems, or VPS for short, are a cross-industry game changer. Each of our clients has specific operational needs, and our services include full customization and integration of VILOTA into their equipment, fleet management, and mission planners. Our technology can provide:

- Camera Calibration - Our cameras are pre-calibrated to perfection for accurate localization.

- Sensor Fusion - Full fusion of all of your equipment-mounted sensors for a complete data set of your positioning.

- Real-Time Data - Receive the data you need in real-time.

- GPS Augmentation - Enhancement of your device's GPS. VILOTA knows when to switch between positional data sets.

- Robust VIO Algorithms - Minimal-drift positional data with loop closure capabilities, even in low-light environments.

We support customer-oriented development projects that introduce the power of VIO through our VPS systems. Please contact us through the contact form below to inquire about a suitable VPS solution for your industry needs.

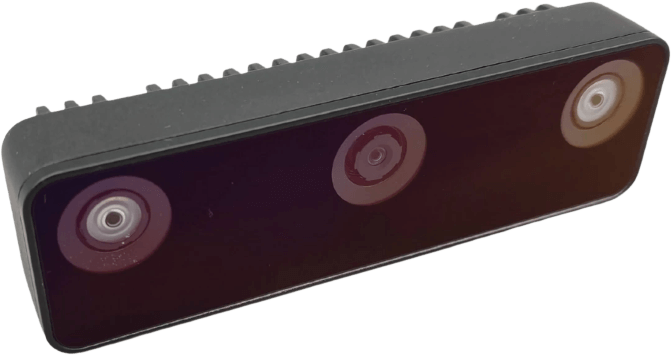

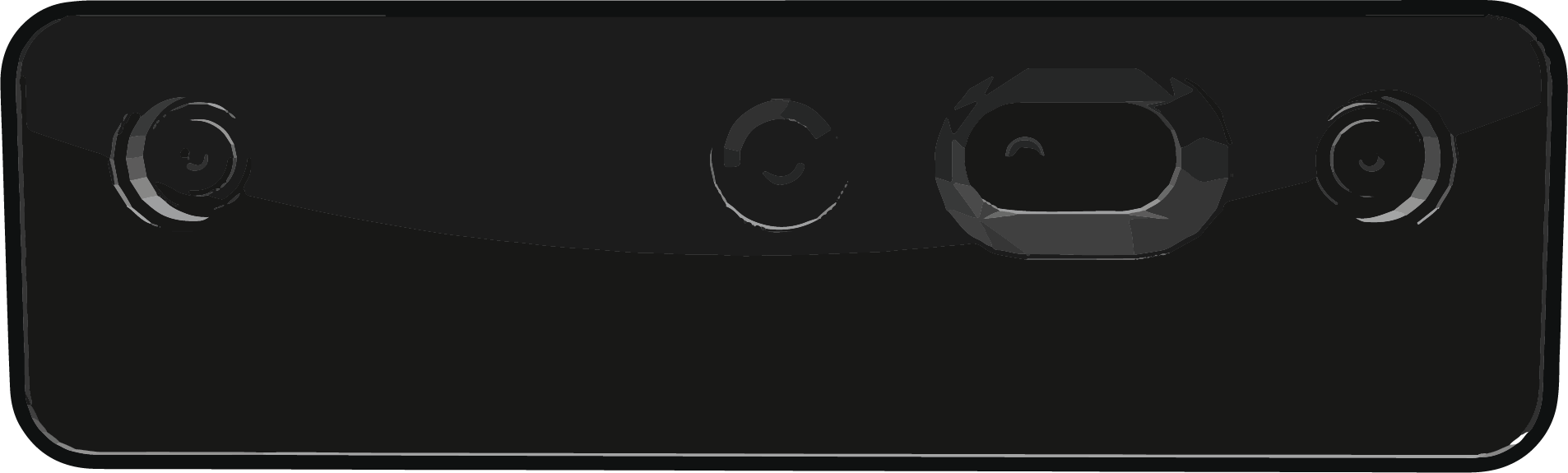

OAK-D W

The OAK-D W is a variant of the OAK-D S2 with a wider field of view (DFOV is ~150°). The OAK-D W doesn’t have a barrel jack for supplying power, so it uses a single USB-C connector for both power and communication.

OAK-D Pro

The OAK-D Pro features an IR laser dot projector (active stereo) and IR illumination LED (for “night vision”).

OAK-D S2

The OAK-D S2 is the Series 2 version of the OAK-D. The main difference is that the OAK-D S2 has a smaller enclosure and is therefore lighter. The OAK-D S2 also doesn’t have a barrel jack for supplying power, so it uses a single USB-C connector for both power and communication.